James here, CEO of Mercury Technology Solutions. Hong Kong — April 23, 2026

Recently, I made the argument that the AI capability gap between the United States and China is actually widening, not shrinking. I received a lot of pushback for that stance. People pointed to various leaderboards and open-source models as proof that the gap was closing.

Now a former LLM researcher who recently left ByteDance has gone on the record, confirming exactly what I’ve been observing.

When industry insiders speak candidly, we need to listen. His breakdown of the structural deficits facing Chinese AI development perfectly mirrors the strategic mistakes I see enterprises making every day when trying to build their brand presence in the AI era.

Here are the six brutal realities the researcher exposed, and why they prove that Data and Authority are structural assets, not things you can shortcut.

1. The Iteration Velocity Deficit

The researcher identified model iteration speed as the biggest hurdle for Chinese tech giants. Comparing ByteDance with Google, he noted that Google can run a full cycle of pre-training and post-training in about three months, while ByteDance takes roughly six. That works out to about four learning cycles a year against two. In the AI arms race, a slower "learning loop" means compounding delays: you don't just lose on a single model release; you lose the compounding interest of continuous evolution.

2. The Hardware Bottleneck (The Silicon Ceiling)

He explicitly linked the widening gap to chip export restrictions. ByteDance relies heavily on NVIDIA, but its top-tier cards are reserved for the most critical core-training teams, while other departments make do with downgraded hardware such as the H20. The compute shortage isn't just a volume problem; it creates a systemic drag on the entire R&D rhythm.

3. The Premium Feedback Loop (Data as an Asset)

This is the most critical structural gap. US frontier labs (like OpenAI and Anthropic) possess massive, global user bases. They feed premium, real-world human interactions back into their models, creating a ruthless, self-improving flywheel. Because Chinese models are perceived as slightly inferior, premium global users do not use them for high-stakes, complex tasks. Consequently, these models are starved of high-quality human feedback data. The researcher emphasized this repeatedly: Without a premium data feedback loop, you cannot cross the threshold to AGI.

4. The "Distillation" Trap (Shortcuts vs. Pipelines)

To compensate for the lack of high-quality data, the researcher admitted, many Chinese companies take the "fast route": distillation. In practice, that means querying US frontier models such as Claude, Gemini, or GPT and using their synthetic answers as training data.

While distillation looks like a fast hack to catch up, the researcher stressed that the truly valuable, long-term strategy is building proprietary, high-quality data pipelines. Companies taking the synthetic shortcut are critically underinvesting in their own foundational data assets.

5. Infrastructure and Engineering Immaturity

It isn't just about the GPUs; it's about the plumbing. The researcher, who interned at Google, noted that US infrastructure (training frameworks, internal toolchains, and overall engineering maturity) is vastly superior. You can have the smartest researchers in the world, but if your underlying infrastructure is brittle, your execution will stay bottlenecked.

6. The Illusion of "Benchmaxxing"

Finally, he called out a massive industry illusion: "Benchmaxxing." Many teams optimize exclusively to score high on standardized AI benchmarks and leaderboards. On paper, their models look incredible. But the researcher bluntly stated that when you actually use these models for real-world applications, the gap between them and US frontier models is glaringly obvious. Gaming the test does not equal real-world capability.

The Strategic Takeaway: Algorithmic Authority is an Asset

When I read this researcher's breakdown, I immediately saw the exact same pathology that plagues enterprise marketing in 2026.

Look at points #4 and #6: Taking synthetic shortcuts (distillation) and gaming the metrics (benchmaxxing). For years, brands have treated SEO and digital marketing as a game to be hacked. They pumped out cheap, synthetic content to manipulate Google's algorithm. They bought cheap backlinks to boost their Domain Authority scores. They were "benchmaxxing" their marketing.

But in the B2A (Business-to-Agent) era, AI search engines like Perplexity, ChatGPT, and Gemini are immune to these cheap tricks.

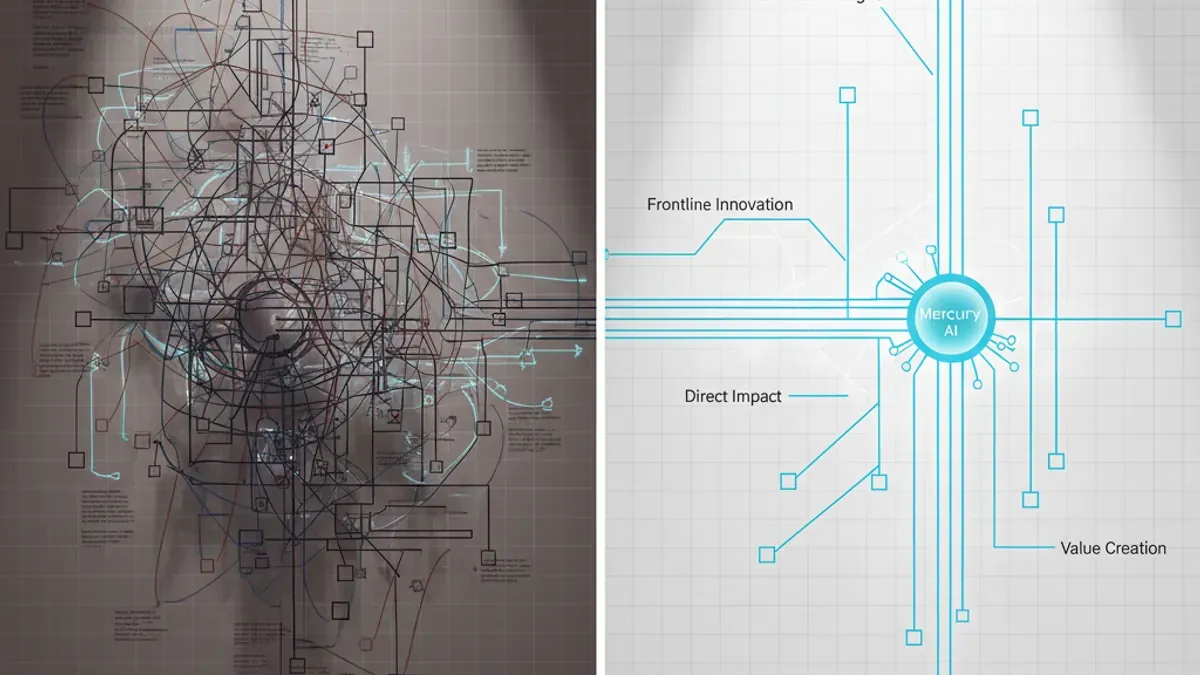

This is why at Mercury, we insist that Algorithmic Authority is a structural asset, not a consumable marketing tactic. The US frontier labs are pulling ahead because they invest in proprietary data pipelines and real-world feedback loops; in the same way, your brand will survive only if you build a verified, multi-channel web of truth.

You cannot trick a frontier model into recommending your software by feeding it synthetic "distilled" blog posts. You have to build actual Authority:

- Securing citations in Tier-1, un-gameable editorial media.

- Structuring your proprietary, first-party data via APIs so LLMs can ingest truth, not marketing fluff.

- Establishing verified entities (Crunchbase, Wikipedia, high-trust forums) that ground your brand in reality.
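For the last two points, one common and concrete mechanism is schema.org `Organization` markup published as JSON-LD on your own site, where the `sameAs` property lists reference pages (such as a Crunchbase profile or Wikipedia article) that identify the same entity. The sketch below is a minimal illustration under stated assumptions, not a description of how Mercury does it: the helper `build_org_jsonld`, the company name, and every URL are hypothetical placeholders, and how much weight any particular AI search engine gives this markup is an assumption, not a guarantee.

```python
import json


def build_org_jsonld(name: str, url: str, same_as: list[str]) -> str:
    """Return a schema.org Organization record serialized as JSON-LD.

    `sameAs` holds URLs of reference pages that identify the same
    organization (e.g. Crunchbase, Wikipedia). The function name is
    hypothetical; only the schema.org vocabulary is standard.
    """
    record = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": same_as,
    }
    return json.dumps(record, indent=2)


# Hypothetical placeholder values; substitute real, verifiable profiles.
snippet = build_org_jsonld(
    name="Example Co",
    url="https://www.example.com",
    same_as=[
        "https://www.crunchbase.com/organization/example-co",
        "https://en.wikipedia.org/wiki/Example_Co",
    ],
)

# Wrap the record in the script tag used to embed JSON-LD in a page.
print(f'<script type="application/ld+json">\n{snippet}\n</script>')
```

The markup only helps if the profiles it points to exist and say the same thing; the structure makes consistent facts easier to connect, but it cannot substitute for them.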

The companies taking shortcuts—relying on cheap hacks and synthetic data—are falling further behind every single day, just like the labs relying on distillation.

Authority cannot be hacked. It must be built.