Hong Kong, March 21, 2026

I spent yesterday afternoon scrolling through traffic reports with my coffee going cold. Three clients called. Two marketing directors sent messages that were just screenshots of Google Analytics: those familiar cliff-drop charts we all dread. Same story, different companies. The March 2026 core update hit, and their organic traffic didn't just dip; it evaporated.

Here's the uncomfortable part: when I looked at their content calendars, I saw exactly what I'd been doing eighteen months ago. Hire writers, target keywords, use AI, publish fast, repeat. It wasn't bad strategy. It was logical strategy. It worked until it didn't.

What Actually Changed

The Google March 2026 core update wasn't a tweak. Semrush recorded volatility at 9.5/10—one of the highest readings they've ever tracked. Affiliate sites took a 71% hit. AI content farms lost 60-80% of their traffic overnight.

But here's what caught my attention: the SaaS blogs got hit too. Not just the obvious spam. The "legitimate" ones. The Series B companies with proper editorial workflows and Grammarly Premium accounts. Their content moats—the ones they spent quarters building—started leaking.

Google didn't announce they were targeting AI content. They announced they were targeting unoriginality. And most of us have been accidentally producing exactly that.

The 3-Second Rule (My Coffee Shop Test)

I have a simple heuristic now. When I'm reviewing a draft, I ask: "Could an LLM write this in 3 seconds while I order my latte?"

Not "Is this AI-generated?"—that's the wrong question. Plenty of human-written content fails this test. The question is: Does this contain anything the internet doesn't already know?

If your article summarizes five other articles that summarize ten other articles, if it offers no operational scar tissue, no proprietary data, no perspective that exists only because you wrote it, then Google's new math considers it noise.

The AI Citation Problem Nobody's Talking About

We tend to think of Search and AI Overviews as separate channels. They're not. Same quality signals feed both.

When Google elevates original research in traditional search, those URLs become the training citations for Gemini, ChatGPT, Perplexity. When Google buries your rehashed listicle, it doesn't just lose ranking—it loses existence in the AI's worldview.

This isn't just an SEO problem. It's a brand authority problem. If AI assistants can't cite you, you become invisible to the next generation of decision-makers who don't browse—they ask.

How We Fixed Our Own Content (The Stack Test)

At Mercury, we stopped fixing individual posts and started auditing our system. I use three layers now—brutal, but necessary:

Layer 1: The Data Test

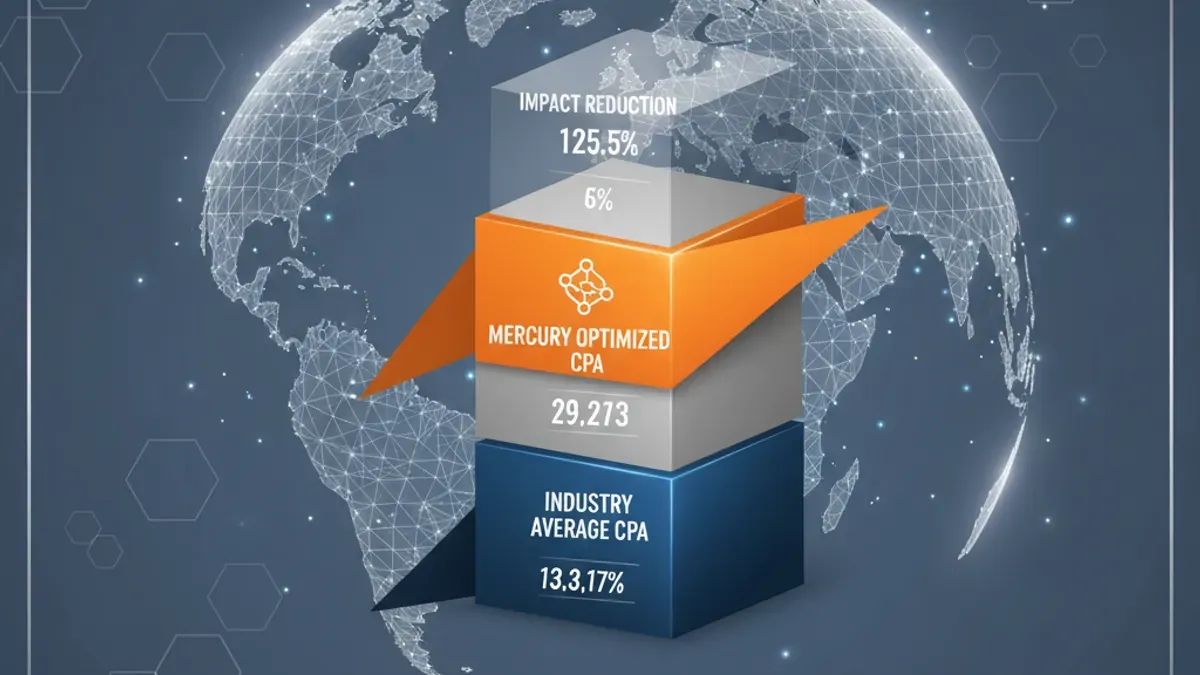

Does this page contain something an LLM literally cannot know? Not "insights"—data. Survey results, benchmark numbers, cost-per-acquisition figures from actual campaigns, failure rates from deployments. If it's information that exists only because we collected it, it passes.

Layer 2: The Scar Tissue Test

Is this written from operational experience, or desk research? Can the author describe the smell of the server room, the specific Excel error that crashed the migration, the client's exact objection on the third call? Theory fails. Specificity wins.

Layer 3: The Opinion Test

Does this piece take a stand that might alienate someone? Or is it safely hedging to "it depends"? Content that tries to please everyone signals algorithmic neutrality. Content that risks disagreement signals human judgment.

I ran our top 20 landing pages through this last month. Most failed Layer 1. The survivors usually failed Layer 2. Only our case studies, our failed-project postmortems, and our occasionally controversial leadership essays made it through all three.

That's not a content problem. That's an identity problem.
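For anyone who wants to run the same audit on a spreadsheet of pages, here is a minimal sketch of the three layers as a sequential filter. It assumes a human reviewer has already answered each layer's question with a yes or no; the code does not judge content, it only records which layer a page falls at first. The class name, field names, and example URLs are hypothetical, not something we ship at Mercury.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Optional


@dataclass
class PageReview:
    """A reviewer's yes/no answers for one page (hypothetical field names)."""
    url: str
    has_proprietary_data: bool         # Layer 1: data that exists only because we collected it
    from_operational_experience: bool  # Layer 2: written from experience, not desk research
    takes_a_stand: bool                # Layer 3: risks disagreement instead of "it depends"


# Layers are checked in order; a page stops at the first one it fails.
LAYERS = [
    ("Layer 1: The Data Test", "has_proprietary_data"),
    ("Layer 2: The Scar Tissue Test", "from_operational_experience"),
    ("Layer 3: The Opinion Test", "takes_a_stand"),
]


def first_failed_layer(review: PageReview) -> Optional[str]:
    """Return the name of the first failed layer, or None if the page passes all three."""
    for layer_name, field_name in LAYERS:
        if not getattr(review, field_name):
            return layer_name
    return None


def audit(reviews: Iterable[PageReview]) -> Dict[str, Optional[str]]:
    """Map each URL to the first layer it failed (None means it survived)."""
    return {review.url: first_failed_layer(review) for review in reviews}


if __name__ == "__main__":
    # Illustrative, made-up pages; not real audit results.
    sample = [
        PageReview("/blog/ultimate-guide", False, False, False),
        PageReview("/case-studies/migration-postmortem", True, True, True),
    ]
    for url, failed in audit(sample).items():
        print(url, "->", failed or "passed all three layers")
```

The ordering matters more than the code: checking the Data Test first means most pages drop out before anyone has to argue about voice or opinion.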

What We're Doing Instead

We've been publishing deeper pieces since October 2025. One research piece with data you can't find elsewhere is worth more than fifty keyword-optimized explainers. We're trading breadth for vertical depth, aggregation for origination, safety for specificity.

The irony? It's actually easier. Writing your 47th "Ultimate Guide to LLM SEO KPI Metrics" is exhausting. Documenting the specific lesson from last Tuesday's deployment failure or success is just... honesty.

A Thought for Your Weekend

If you're looking at your traffic charts today and feeling that panic, I get it. But don't rush to rewrite your old posts. Ask instead: What do we know that nobody else does?

Start there. The rest is just formatting.

James Huang
CEO, Mercury Technology Solutions
Accelerate Digitality